Mutterings about star ratings are as much a part of the Fringe as plastic pint glasses. Some people think critics are too generous, some people too harsh (mainly about their own shows), and others think star ratings should be abolished altogether. James T. Harding does the sums and finds that, whatever you think it means, star-rating inflation is real.


The Fringe is the largest arts festival in the world, which makes choosing what to see quite an intimidating prospect, especially for first-time visitors. Enter the star-rating system: your at-a-glance guide to what is worth seeing at the Fringe… except it seems like almost every show has a raft of four- and five-star ratings printed or stapled onto its flyers. The fact is, if everyone’s getting positive star ratings, star ratings are pretty meaningless.

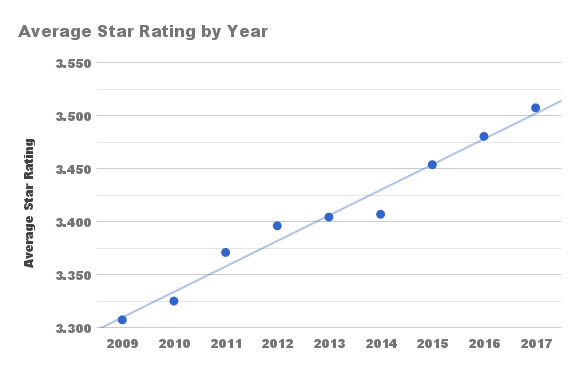

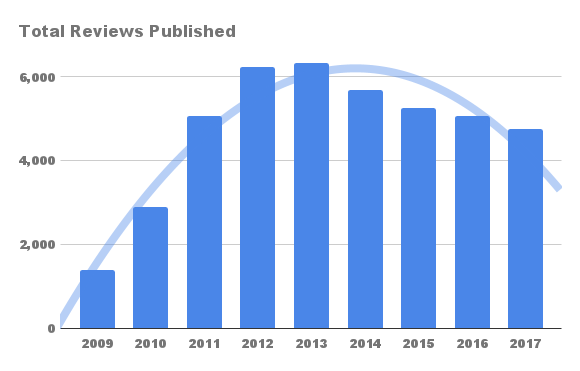

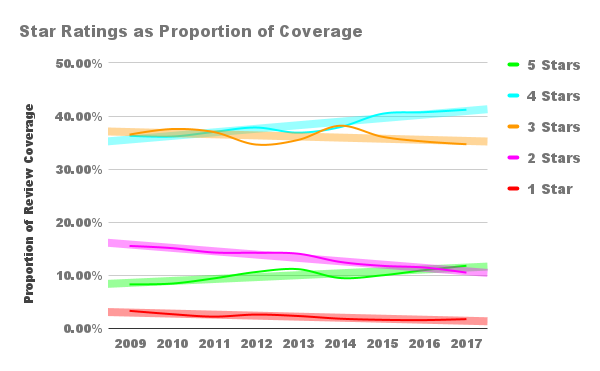

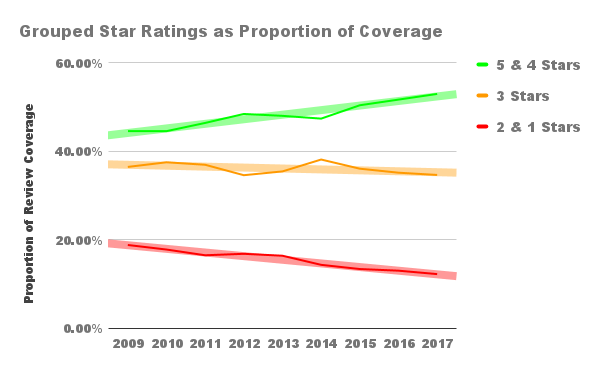

Using data aggregated on the List’s top-rated shows page, checked on August 26, I made some charts:

Figure 1

Figure 2

As you can see, the average star rating of reviews published at the festival (the data includes EIF shows as well as the Fringe) has risen from 3.30 to 3.51 over the last nine years. It wouldn’t be unreasonable to exclude 2009 and 2010 from the trend on the grounds that they have a much smaller sample size, but even if you do that the average still rises from 3.37 to 3.51. It isn’t as mammoth a rise as you might expect from the way drunk fringe lags complain about it, but star inflation is indisputably real.
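For readers who want to check the sums, an average star rating is just a weighted mean of the star values, weighted by how many reviews received each one. The sketch below shows that calculation under stated assumptions: the function name `average_rating` is made up for illustration, and the counts are hypothetical round numbers, not the List’s figures.

```python
def average_rating(counts):
    """Mean star rating from a mapping of star value -> number of reviews."""
    total_reviews = sum(counts.values())
    if total_reviews == 0:
        raise ValueError("no reviews to average")
    # Each star value is weighted by the number of reviews that awarded it.
    total_stars = sum(stars * n for stars, n in counts.items())
    return total_stars / total_reviews


# Hypothetical counts for illustration only (NOT the List's data).
example_counts = {1: 2, 2: 10, 3: 30, 4: 40, 5: 18}
example_average = average_rating(example_counts)  # 362 stars / 100 reviews
```

Running the same function on each year’s counts would give the kind of year-by-year averages quoted above.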

There are, of course, different ratings and coverage policies at different publications. Some focus on debut shows, others go after safe bets. Some review in the context of the Fringe, others hold shows to the standard of London theatre. Some choose to publish only three-star ratings and above, others have critical integrity. Such policies are not an important factor in star inflation, however, because they are mostly constant from year to year.

Average star rating is a bit of an abstract concept, especially as star ratings are generally looked at as an exponential rather than a linear scale. Naturally, I wanted to drill down into more detail by star-rating category:

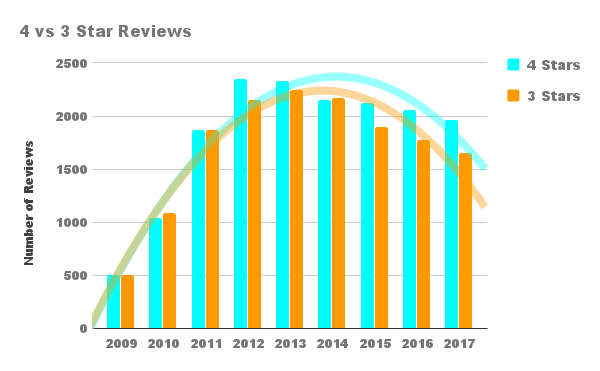

Figure 3

Figure 4

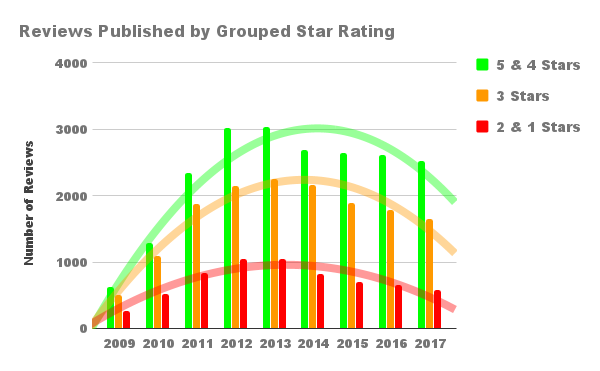

Figure 5

Figure 6

It turns out that the proportion of positive star ratings published has gone up, while negative ratings have fallen. The biggest shift has been directly on either side of the three-star rating, with four stars rising and two stars falling by five percentage points each.
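A shift of “five percentage points” is a change in a category’s share of all reviews, not a five per cent change in its raw count. The sketch below shows one way to compute such shifts; the function names and both sets of counts are hypothetical, chosen only so the arithmetic is easy to follow, and are not the List’s data.

```python
def rating_shares(counts):
    """Percentage of all reviews that fall in each star category."""
    total = sum(counts.values())
    return {stars: 100 * n / total for stars, n in sorted(counts.items())}


def point_change(earlier, later):
    """Change in each category's share, in percentage points."""
    before, after = rating_shares(earlier), rating_shares(later)
    categories = sorted(set(before) | set(after))
    return {s: after.get(s, 0.0) - before.get(s, 0.0) for s in categories}


# Hypothetical counts for two years (NOT the List's data).
# The later year has twice as many reviews, so raw counts alone would mislead.
earlier_year = {1: 5, 2: 20, 3: 35, 4: 30, 5: 10}
later_year = {1: 10, 2: 30, 3: 70, 4: 70, 5: 20}

shift = point_change(earlier_year, later_year)
# Two stars lose five points of share and four stars gain five,
# even though the raw number of two-star reviews went up.
```

Working in shares rather than counts matters here because the number of reviews in the sample varies a good deal from year to year.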

Sometime in 2011, four-star reviews started to become more common than three-star reviews, both as a proportion of total coverage and in absolute terms. (That was the year Lyn Gardner was moved to write a condemnation of star inflation in the Guardian.) This stabilised in 2014, and four stars is now firmly the most commonly published rating overall.

This year, 53% of reviews published sported four- or five-star ratings. It’s so tempting to quip that above average is the new average, or that four stars is the new three stars, but sadly these statistics don’t tell us enough about the critical standard of the reviews for such appealing conclusions to be drawn.

As Richard Stamp, the editor of Fringe Guru, put it to me in a bar on the last night of the Fringe, it could simply be that reviewers are getting better at finding the best shows to see. One trend I’ve noticed in the last few years is for reviews to cluster around award winners and other buzz shows. This is natural, I suppose, in that readers are more interested in reading about the ‘winners’ of the Fringe. Reviewers too – if you’ll forgive my cynicism – are more interested in enjoying a good show than they are in sitting through a bad one. This coverage clustering contributes to star inflation twice over, however. Firstly, it increases the number of positive star ratings. Secondly, it decreases the number of negative star ratings, because every review slot spent on a buzz show is a slot not spent on a show that might have earned one or two stars.

A similar effect is caused by the rise of the returning show, one which has done well critically in a previous year and is back for more. Although some publications avoid these known-quantity shows, others embrace them. The result, of course, is yet more star inflation. It isn’t that these shows are being given higher ratings than they deserve; it’s that their inclusion increases the star-rating average as experienced by the audience.
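The selection effect is easy to see with toy numbers. In the sketch below, no show is rated more generously than before; the only thing that changes is which shows fill the review slots. The helper `reallocate` and all the ratings are hypothetical, invented purely to illustrate the argument.

```python
def mean(ratings):
    """Plain arithmetic mean of a list of star ratings."""
    return sum(ratings) / len(ratings)


def reallocate(ratings, drop, add):
    """Swap one review of `drop` stars for one review of `add` stars.

    Models a reviewer spending a slot on a buzz or returning show
    instead of an unknown one; the number of slots stays the same.
    """
    updated = list(ratings)
    updated.remove(drop)   # the review that never gets written
    updated.append(add)    # the review of the safer bet
    return updated


# Hypothetical ratings for eight review slots (not real data).
baseline = [2, 2, 3, 3, 3, 4, 4, 5]

# Two slots move from two-star shows to shows that merit four and five stars.
clustered = reallocate(reallocate(baseline, 2, 4), 2, 5)

baseline_mean = mean(baseline)    # 26 / 8 = 3.25
clustered_mean = mean(clustered)  # 31 / 8 = 3.875
```

Each swap counts twice: it adds a positive rating and removes a negative one, which is why the average moves so far on only two changed slots.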

Caveats aside, it isn’t that preposterous an idea that reviewers are getting more generous. Certainly, my personal impression of the Fringe over the last few years is that star-rating standards are falling across all publications.

High ratings are good for shows and publications to promote themselves in the short term, but if the Fringe community allows star inflation to continue at its current rate we’ll reach a tipping point for the whole system. If shows stop putting four stars on their flyers, we’ll know it’s too late.

Photo by Kim Traynor, used under a Creative Commons licence.